AgentScope vs LangChain vs CrewAI vs AutoGen: Framework Comparison

All Four Frameworks Now Support MCP and A2A -- So What Actually Differentiates Them?

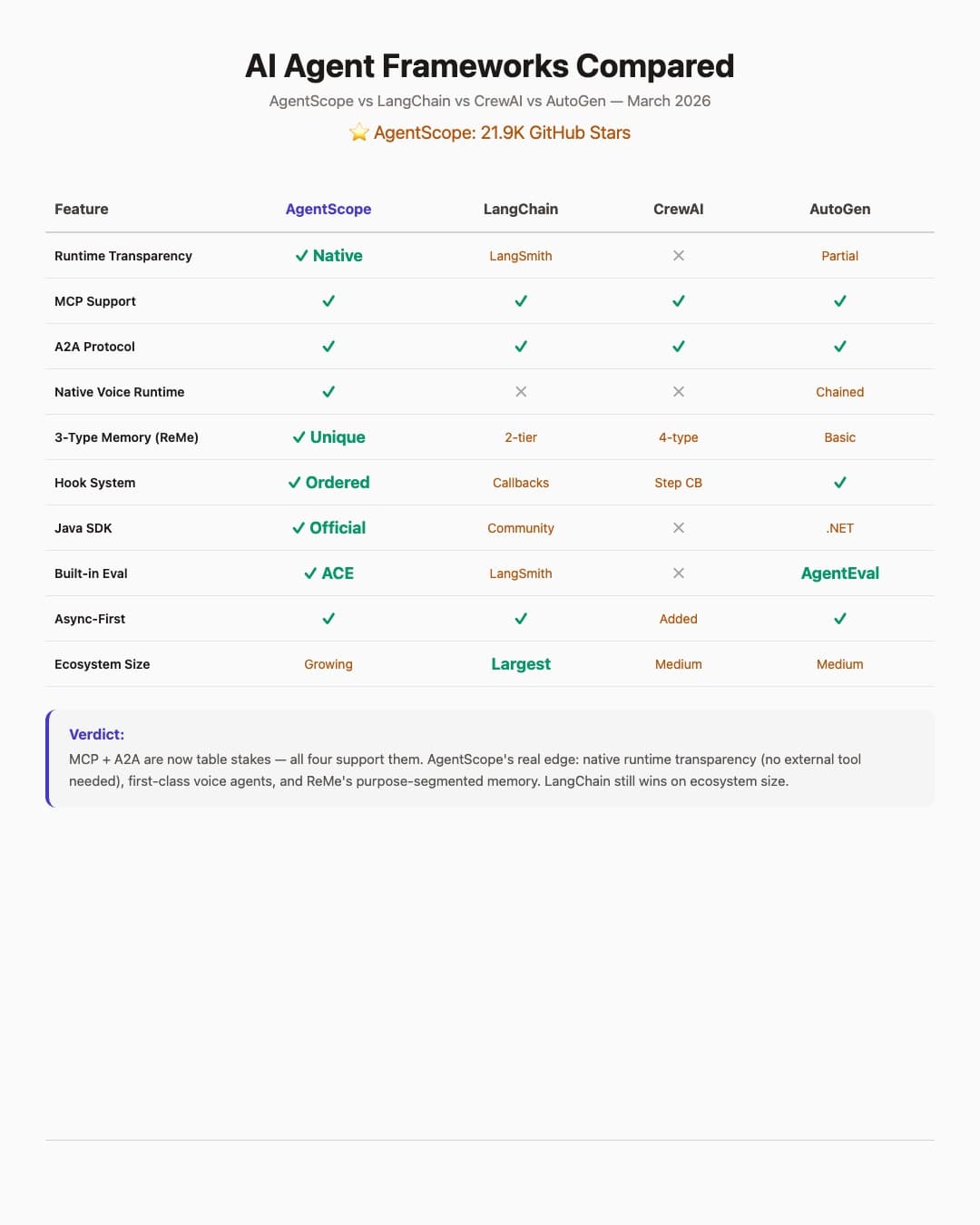

Protocol convergence happened faster than anyone expected. Support for MCP (Model Context Protocol) and A2A (Agent-to-Agent) has landed across AgentScope, LangChain, CrewAI, and AutoGen, so the "which framework supports the standard" question is settled. The real differentiators now are architecture, developer experience, and what each framework gives you that the others can't.

I've shipped production systems with LangChain/LangGraph and evaluated the other three extensively. Here's the honest breakdown.

AgentScope -- 21,948 Stars, Alibaba Tongyi Lab

AgentScope's architecture is built around message-passing with first-class distributed deployment. Agents communicate through standardized message objects and can run on different machines via RPC with an actor-based concurrency model.
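
To make the message-passing idea concrete, here's a minimal single-process sketch in plain Python. It does not use AgentScope's API: `Msg`, `Agent`, and `run` are hypothetical names I made up for illustration, and the FIFO queue stands in for whatever transport (local or RPC) actually delivers messages.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Msg:
    """Hypothetical standardized message: who sent it, to whom, and what."""
    sender: str
    recipient: str
    content: str


class Agent:
    """Hypothetical agent: receives one Msg and returns zero or more replies."""

    def __init__(self, name: str, handler: Callable[[Msg], List[Msg]]):
        self.name = name
        self.handler = handler

    def receive(self, msg: Msg) -> List[Msg]:
        return self.handler(msg)


def run(agents: Dict[str, Agent], first: Msg, max_steps: int = 10) -> List[Msg]:
    """Deliver messages in FIFO order until the queue drains or the step cap is hit."""
    queue, log = [first], []
    for _ in range(max_steps):
        if not queue:
            break
        msg = queue.pop(0)
        log.append(msg)
        # Messages addressed to an unknown name (e.g. "user") are logged but not routed.
        if msg.recipient in agents:
            queue.extend(agents[msg.recipient].receive(msg))
    return log


def researcher(msg: Msg) -> List[Msg]:
    return [Msg("researcher", "writer", f"notes on {msg.content}")]


def writer(msg: Msg) -> List[Msg]:
    return [Msg("writer", "user", f"draft from {msg.content}")]


agents = {
    "researcher": Agent("researcher", researcher),
    "writer": Agent("writer", writer),
}
log = run(agents, Msg("user", "researcher", "MCP"))
```

Because agents only ever see `Msg` objects, swapping the in-memory queue for a network transport would not change the agent code, which is the property that makes distributed deployment a natural fit for this style.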

What makes it unique:

- Native runtime transparency -- built-in observability dashboard, no separate product needed. LangChain requires LangSmith (a separate paid product) for equivalent visibility

- ReMe 3-type memory system -- personal memory, task memory, and tool memory. This isn't just short-term/long-term -- it models memory the way humans actually use context

- Native voice/realtime runtime -- speech-enabled agents without bolting on a separate TTS/STT pipeline

- Official Java SDK (2.2K stars) -- the only one of the four with an official JVM SDK, for enterprise teams that aren't all-in on Python

The tradeoff: Smaller ecosystem, documentation gaps for advanced use cases, and the distributed features add complexity even when you're running on a single machine.

LangChain / LangGraph

LangGraph models agents as state machines -- nodes are functions (LLM calls, tool execution, routing), edges define transitions. It's the most mature framework in the space with 400+ integrations.
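
Here's a rough sketch of the state-machine idea in plain Python, without importing LangGraph. The names (`nodes`, `edges`, `run_graph`, `END`) are my own for this example and are not LangGraph's API; the "model" node is a stand-in for an LLM call.

```python
from typing import Callable, Dict

State = Dict[str, object]
Node = Callable[[State], State]

END = "END"  # sentinel name chosen for this sketch


def call_model(state: State) -> State:
    # Stand-in for an LLM call: asks for a tool unless a tool result already exists.
    if "tool_result" in state:
        return {**state, "answer": f"result is {state['tool_result']}", "needs_tool": False}
    return {**state, "needs_tool": True}


def run_tool(state: State) -> State:
    # Stand-in for tool execution.
    return {**state, "tool_result": 2 + 2}


def route_after_model(state: State) -> str:
    # Conditional edge: picks the next node from the current state.
    return "tool" if state["needs_tool"] else END


nodes: Dict[str, Node] = {"model": call_model, "tool": run_tool}
edges: Dict[str, Callable[[State], str]] = {
    "model": route_after_model,
    "tool": lambda state: "model",  # fixed edge back to the model
}


def run_graph(entry: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph: run the current node, then follow its outgoing edge."""
    current = entry
    for _ in range(max_steps):
        if current == END:
            return state
        state = nodes[current](state)
        current = edges[current](state)
    raise RuntimeError("graph did not reach END within max_steps")


final = run_graph("model", {"question": "what is 2 + 2?"})
```

The point of the pattern is that control flow lives in the `edges` table rather than in nested `if`/`while` code, which is also why it takes some getting used to if you normally write imperative loops.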

Strengths:

- Ecosystem depth is unmatched -- vector stores, LLMs, tools, retrievers, all pre-built

- Battle-tested at scale with thousands of production deployments

- LangSmith provides serious observability (tracing, evaluation, datasets)

The tradeoff: LangSmith is a separate product with its own pricing -- runtime transparency is not native. The abstraction layers are deep enough that simple tasks feel over-engineered. Breaking changes between versions have burned teams repeatedly. The graph DSL has a real learning curve for developers used to imperative code.

CrewAI

CrewAI's mental model is role-based: define "crews" of agents with roles, goals, and backstories. Agents collaborate through delegation, mirroring how human teams operate.
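
A stripped-down illustration of the role/goal/backstory model, again in plain Python rather than CrewAI's own classes. `RoleAgent`, `Crew`, and the keyword-based `can_handle` check are assumptions for this sketch; in a real crew the delegation decision is made by the model reading the role descriptions, not by string matching.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RoleAgent:
    role: str
    goal: str
    backstory: str
    skills: List[str]

    def system_prompt(self) -> str:
        # The role description is plain natural language handed to the model.
        return f"You are the {self.role}. Goal: {self.goal}. Background: {self.backstory}"

    def can_handle(self, task: str) -> bool:
        # Deterministic stand-in for the model's judgment about task fit.
        return any(skill in task.lower() for skill in self.skills)


@dataclass
class Crew:
    agents: List[RoleAgent]

    def delegate(self, task: str, asked: RoleAgent) -> Optional[RoleAgent]:
        """Return the agent that ends up owning the task, or None if nobody fits."""
        if asked.can_handle(task):
            return asked
        for other in self.agents:
            if other is not asked and other.can_handle(task):
                return other
        return None


researcher = RoleAgent("Researcher", "find sources", "former librarian", ["research", "sources"])
writer = RoleAgent("Writer", "write a summary", "tech journalist", ["write", "summary"])
crew = Crew([researcher, writer])

owner = crew.delegate("Write a summary of MCP", asked=researcher)
```

Here the task lands on the writer even though the researcher was asked first. Replace the keyword check with a model call and you get the same flexibility, along with the run-to-run variance that comes with it.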

Strengths:

- Fastest time-to-prototype for multi-agent scenarios

- 4 memory types (short-term, long-term, entity, contextual) with async support

- MCP and A2A support for cross-framework interop

- Step callbacks for intercepting agent execution at each stage

- Clean YAML configuration for non-developers

The tradeoff: Role descriptions are natural language, which means inconsistent behavior across runs. Delegation patterns can surprise you when agents route tasks in unexpected ways. Debugging a crew that's gone off-script requires patience.

AutoGen

Microsoft's entry models agents as structured conversations with strong human-in-the-loop patterns.

Strengths:

- Hooks system for intercepting and modifying agent behavior at runtime

- AgentEval for systematic agent performance evaluation

- MCP and A2A support

- Best choice for supervised workflows where a human needs to approve agent decisions
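
The approval-gate pattern itself is simple enough to sketch without any framework. The code below is not AutoGen's API: `Action`, `run_with_approval`, and `policy_approver` are hypothetical names, and the approver is a fixed policy so the example runs non-interactively. In a supervised deployment the approver would typically prompt a person instead.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    tool: str
    argument: str


Approver = Callable[[Action], bool]


def execute(action: Action) -> str:
    # Stand-in for a real side effect (API call, file write, and so on).
    return f"ran {action.tool}({action.argument})"


def run_with_approval(actions: List[Action], approve: Approver) -> List[str]:
    """Run each proposed action only if the approver says yes."""
    results = []
    for action in actions:
        if approve(action):
            results.append(execute(action))
        else:
            results.append(f"skipped {action.tool}({action.argument})")
    return results


def policy_approver(action: Action) -> bool:
    # A human prompt (e.g. wrapping input()) could go here; a fixed rule keeps this testable.
    return action.tool != "delete_file"


proposed = [Action("search", "MCP spec"), Action("delete_file", "notes.txt")]
results = run_with_approval(proposed, policy_approver)
```

The gate sits between "agent proposes" and "system executes", so nothing with side effects runs until the approver returns `True`.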

The tradeoff: The conversation-centric model feels restrictive for non-chat workflows. AutoGen 0.4 was a significant rewrite that fragmented the community. Setup complexity is high for simple use cases.

Decision Matrix

| Factor | LangChain/LangGraph | CrewAI | AgentScope | AutoGen |
|---|---|---|---|---|
| Production readiness | High | Medium | Medium | Medium |
| Time to prototype | Medium | Fast | Medium | Medium |
| Distributed agents | Limited | No | Native | Limited |
| Observability | LangSmith (separate) | Step callbacks | Native dashboard | Hooks |
| Memory system | Basic | 4 types | ReMe 3-type | Conversation |
| Java SDK | No | No | Yes (2.2K stars) | No |
| Voice runtime | No | No | Native | No |
| MCP + A2A | Yes | Yes | Yes | Yes |

The Actual Recommendation

If you need production reliability and ecosystem breadth: LangChain/LangGraph. Nothing else has 400+ integrations.

If you need a working demo by Friday: CrewAI. The role-based model maps to product demos beautifully.

If you're building distributed, observable, multi-modal agent systems: AgentScope. The native runtime transparency, ReMe memory, voice runtime, and Java SDK are edges no other framework has matched.

If your workflow requires human approval gates: AutoGen.

The hard question none of these frameworks have answered yet: what happens when you need agents from different frameworks to collaborate in production? MCP and A2A provide the protocol layer, but the orchestration layer above it is still everyone's custom code.